Implementing SAP Master Data Governance (MDG) gets you the big wins: controlled workflows, approvals, and fewer duplicates. But even with great governance, teams often get stuck with a quieter, and sometimes more expensive, problem: records that are technically approved but still incomplete, inconsistent, or outdated.

That’s why Data Quality Management (DQM) matters. In SAP MDG, DQM is less a single “feature” and more a continuous process that draws on the toolbox SAP provides:

- Rules that prevent bad input

- Logic that derives values

- Enrichment from external sources

- Scoring that makes quality measurable

- Remediation tools that help you fix issues at the source

A solid DQM approach should deliver:

- High-quality data, which leads to better decisions

- More efficient operations, which lead to cost savings

- Lower risk and easier compliance, which reduce the likelihood of fines

- Better analytics and AI integration, which drive innovation

This post gives a general overview of the toolbox to help you decide how each capability can support your data goals.

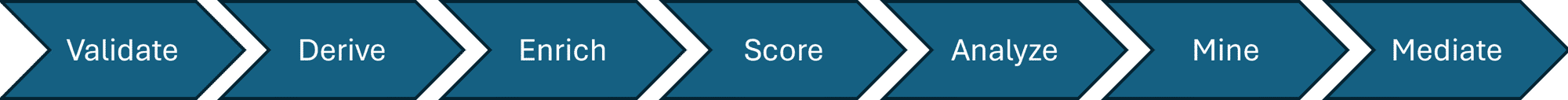

Data Quality Management Toolchain

Validation Rules: Stop Bad Data at the Door

The first functionality is Validation Rule Management. When a business object is created or changed, the record is checked against the defined validation rules. If the entered data doesn’t meet the requirements, an error message is raised. These rules are important because they directly enforce data standards and ensure consistency. For example, if the selected payment method is SEPA, the IBAN field must be filled in.
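To illustrate the logic only (in MDG, validation rules are configured in the system, not written in Python), the SEPA example boils down to a conditional check that returns an error when the condition is violated. The `Supplier` record and the `validate_sepa_iban` function below are hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Supplier:
    # Hypothetical, heavily simplified record; real MDG business partners carry far more fields.
    name: str
    payment_method: str
    iban: Optional[str] = None


def validate_sepa_iban(record: Supplier) -> List[str]:
    """Return error messages; an empty list means the record passes the rule."""
    errors = []
    # Treat a missing or whitespace-only IBAN as "not filled in".
    if record.payment_method == "SEPA" and not (record.iban or "").strip():
        errors.append("IBAN is required when the payment method is SEPA.")
    return errors


print(validate_sepa_iban(Supplier("ACME GmbH", "SEPA")))
# ['IBAN is required when the payment method is SEPA.']
print(validate_sepa_iban(Supplier("ACME GmbH", "SEPA", "DE89370400440532013000")))
# []
```

Returning a list of messages rather than failing on the first problem mirrors how a user typically sees all open errors on a change request at once.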

Derivations: Make the “Right Value” the Default

A second functionality is Derivations. With DQM, it is possible to derive complete entities; for example, a storage location can be defaulted from its related plant. The same principle applies to field values: when values such as the country and the postal code are entered, the region can be filled in automatically. By supplying these values automatically, derivations simplify master data processes and improve the quality of business objects.

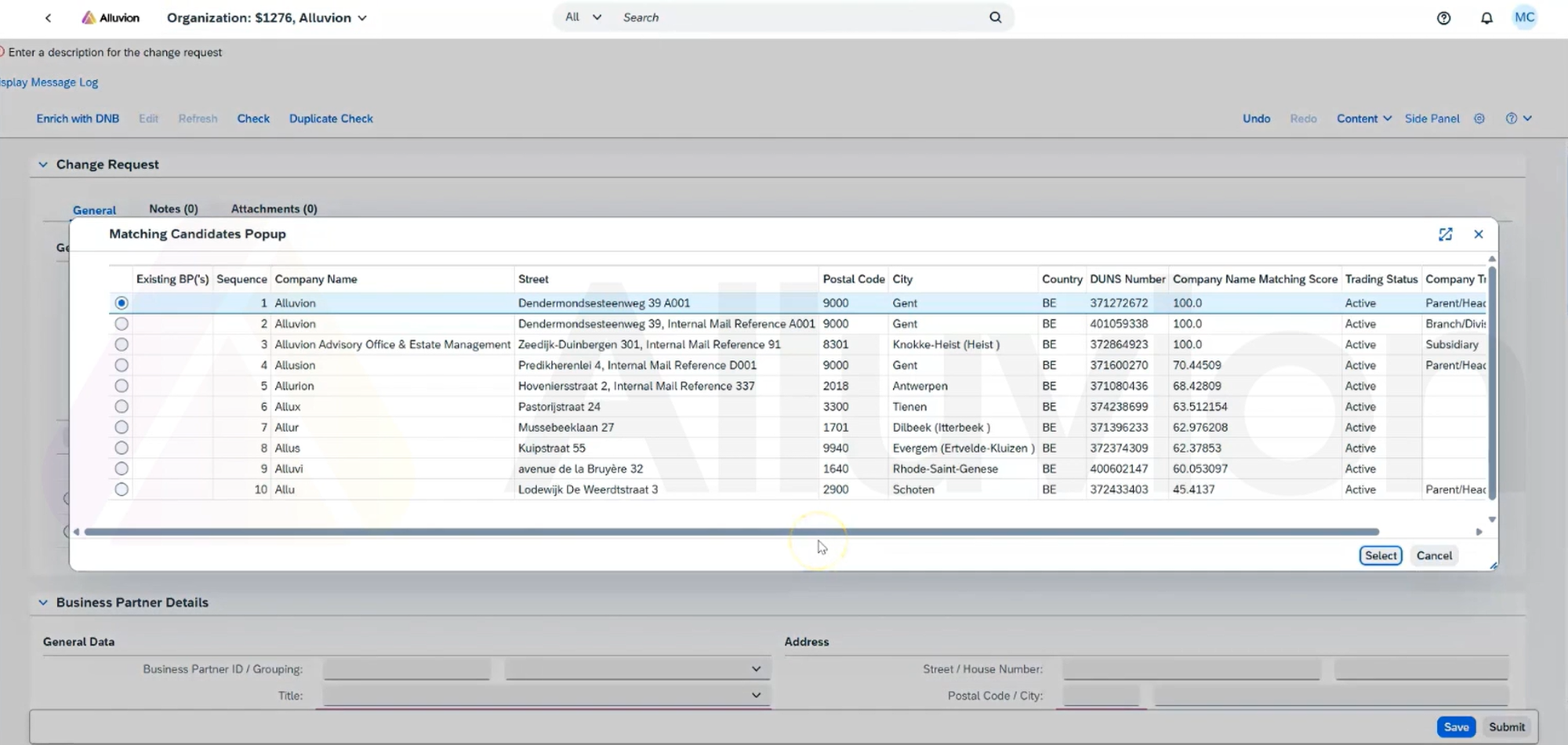
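As a rough sketch of the country and postal code example, a derivation can be thought of as a lookup that fills the region only when the user has left it empty. The `REGION_RANGES` table and `derive_region` function are hypothetical, and the two Belgian ranges are illustrative only; a real derivation would read a maintained reference table inside MDG.

```python
from typing import Optional

# Illustrative postal-code ranges per country and region (not a complete reference).
REGION_RANGES = [
    ("BE", 1000, 1299, "Brussels"),
    ("BE", 2000, 2999, "Antwerp"),
]


def derive_region(country: str, postal_code: str) -> Optional[str]:
    """Return the region for a country/postal code pair, or None if no range matches."""
    if not postal_code.isdigit():
        return None
    code = int(postal_code)
    for ctry, low, high, region in REGION_RANGES:
        if ctry == country and low <= code <= high:
            return region
    return None


record = {"country": "BE", "postal_code": "2018", "region": None}
# Derive the region as a default: only fill it when nothing was entered.
if record["region"] is None:
    record["region"] = derive_region(record["country"], record["postal_code"])
print(record["region"])  # Antwerp
```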

Enrichment: Bring in External Truth

A common data quality problem is incompleteness, often a consequence of human error or miscommunication. One way to address it is to enrich data from external databases, for example CDQ or Dun & Bradstreet. By connecting MDG to these external sources, you can match existing business objects and enrich them with correct, up-to-date data.

Example of enrichment when creating a business partner (BP)

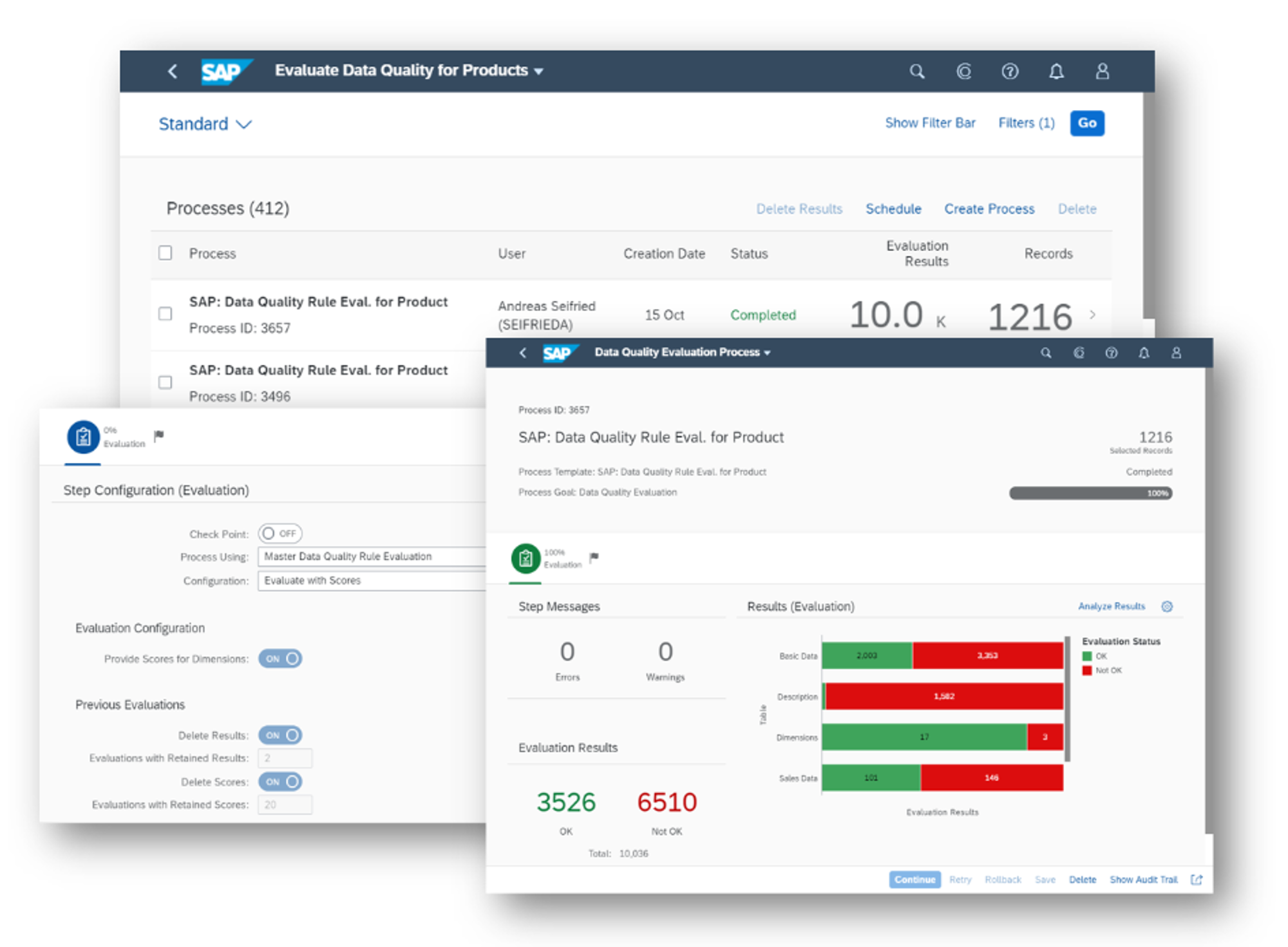
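The match-then-enrich idea can be sketched in a few lines. This is not the CDQ or Dun & Bradstreet API: the external provider is simulated with an in-memory dictionary, and the VAT number used as the match key, the sample data, and the `enrich` function are all hypothetical.

```python
from typing import Dict

# Stand-in for an external provider; the data and the VAT-number match key are hypothetical.
EXTERNAL_DIRECTORY: Dict[str, Dict[str, str]] = {
    "BE0123456789": {"name": "Example Trading NV", "city": "Antwerp", "street": "Main Street 1"},
}


def enrich(record: Dict[str, str]) -> Dict[str, str]:
    """Fill empty attributes from the external match; keep values that are already present."""
    enriched = dict(record)  # work on a copy so the original record is untouched
    match = EXTERNAL_DIRECTORY.get(record.get("vat_number", ""))
    if match is None:
        return enriched
    for field, value in match.items():
        if not enriched.get(field):
            enriched[field] = value
    return enriched


bp = {"vat_number": "BE0123456789", "name": "Example Trading", "city": ""}
print(enrich(bp))
# {'vat_number': 'BE0123456789', 'name': 'Example Trading', 'city': 'Antwerp', 'street': 'Main Street 1'}
```

This sketch only fills gaps. Whether external values should also overwrite existing ones (for example, to correct an outdated name) is a governance decision that typically goes through review rather than happening silently.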

Data Quality Evaluation: Turn Rules into a Measurable Score

The previous functionalities help ensure clean data at the point of entry, but it is still important to evaluate data quality continuously. To visualize data quality, a score is calculated based on weighted rules. These rules can be configured according to business needs along six data quality dimensions and should be updated over time as those needs change.
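To show how weighted rules can turn into a single number, here is a minimal sketch that assumes the score is a weighted average of per-rule pass rates. The exact calculation in MDG may differ; the `Rule` class, the `quality_score` function, the dimension labels, and the sample records are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Record = Dict[str, str]


@dataclass
class Rule:
    name: str
    dimension: str  # illustrative labels such as "completeness" or "validity"
    weight: float
    check: Callable[[Record], bool]


def quality_score(records: List[Record], rules: List[Rule]) -> float:
    """Weighted average of per-rule pass rates, expressed as a percentage."""
    total_weight = sum(rule.weight for rule in rules)
    if not records or total_weight == 0:
        raise ValueError("need at least one record and a positive total weight")
    weighted = 0.0
    for rule in rules:
        passed = sum(1 for rec in records if rule.check(rec))
        weighted += rule.weight * passed / len(records)
    return 100 * weighted / total_weight


rules = [
    Rule("IBAN filled for SEPA", "completeness", 2.0,
         lambda r: r.get("payment_method") != "SEPA" or bool(r.get("iban"))),
    Rule("Country is a two-letter code", "validity", 1.0,
         lambda r: len(r.get("country", "")) == 2),
]
records = [
    {"payment_method": "SEPA", "iban": "DE89370400440532013000", "country": "DE"},
    {"payment_method": "SEPA", "iban": "", "country": "DE"},
    {"payment_method": "CHECK", "country": "Germany"},
    {"payment_method": "CHECK", "country": "FR"},
]
print(f"{quality_score(records, rules):.1f}")  # 75.0
```

Both rules pass for three of the four records, so the score is 75.0 regardless of the weights; the weights start to matter once rules have different pass rates.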

Based on this evaluation, you can analyze the results through a dashboard and various KPIs that offer extensive customization. For a more detailed view, click a card on the overview page to navigate to the related app.

Various Data Quality Evaluation windows

Data quality analysis

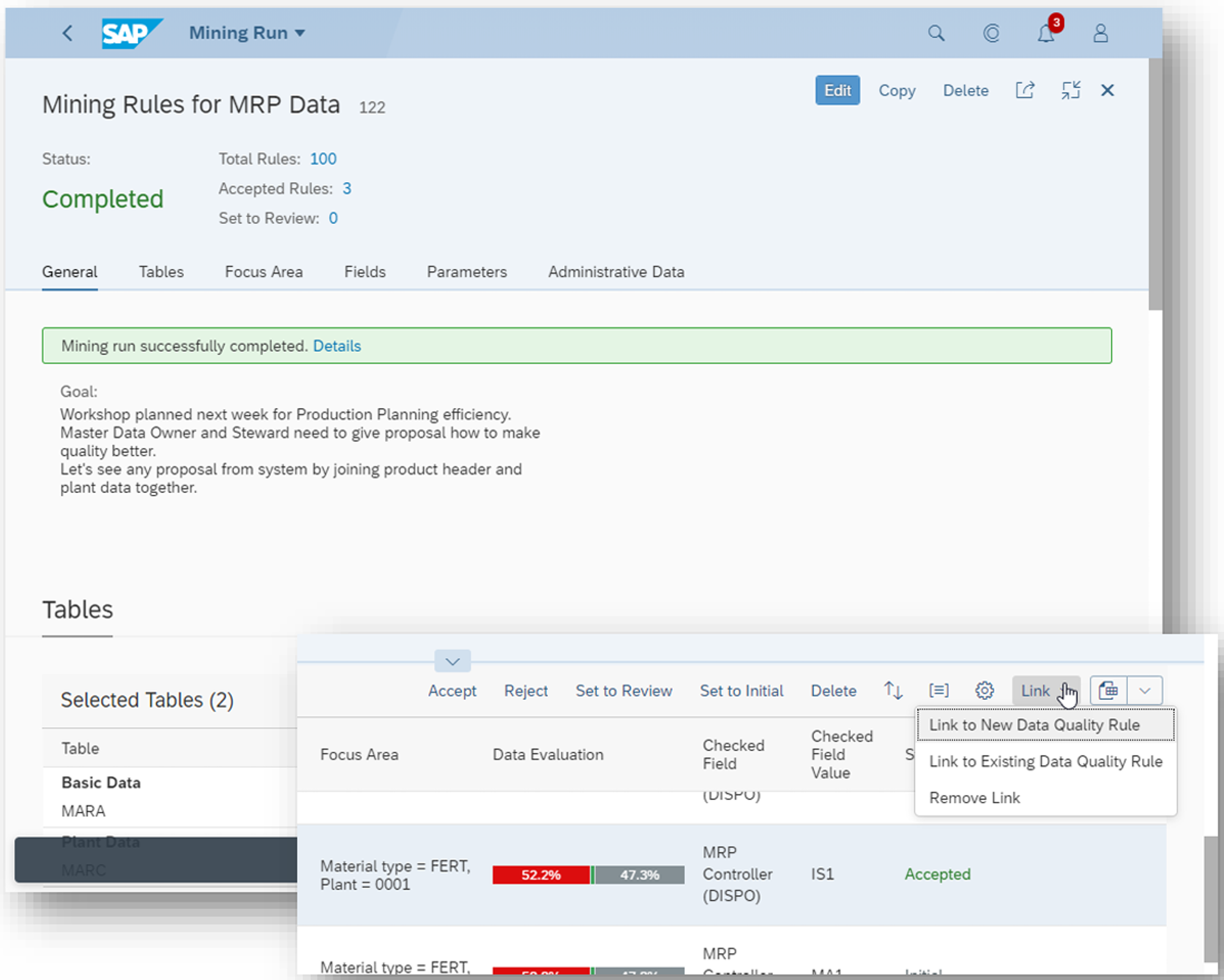

Rule Mining: Accelerate Rule Discovery

Many businesses don't have a formal collection of important quality rules and must go through a process of rule discovery. Because this discovery can take a long time and may produce suboptimal results, SAP provides the Rule Mining functionality. It analyzes existing business objects to generate a list of plausible data quality rules, which the data governance team then reviews to select the relevant ones.

Rule Mining windows
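The general idea behind rule mining can be illustrated with a simple frequency heuristic: look at existing records and propose "if A has value v, then B must be filled" whenever that pattern holds often enough. This is not SAP's Rule Mining algorithm, which is not described here; the `mine_rules` function, its thresholds, and the sample data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Dict[str, str]


def mine_rules(records: List[Record], condition_field: str, target_field: str,
               min_support: int = 2, min_confidence: float = 0.9) -> List[Tuple[str, float]]:
    """Propose candidate rules 'if condition_field == v then target_field is filled'."""
    seen: Dict[str, int] = defaultdict(int)
    filled: Dict[str, int] = defaultdict(int)
    for rec in records:
        value = rec.get(condition_field, "")
        seen[value] += 1
        if rec.get(target_field):
            filled[value] += 1
    candidates = []
    for value, count in seen.items():
        confidence = filled[value] / count
        # Keep only patterns seen often enough and holding for most records.
        if count >= min_support and confidence >= min_confidence:
            candidates.append((f"If {condition_field} = {value!r}, {target_field} must be filled", confidence))
    return candidates


records = [
    {"payment_method": "SEPA", "iban": "DE89370400440532013000"},
    {"payment_method": "SEPA", "iban": "FR1420041010050500013M02606"},
    {"payment_method": "SEPA", "iban": "NL91ABNA0417164300"},
    {"payment_method": "SEPA", "iban": ""},
    {"payment_method": "CHECK", "iban": ""},
    {"payment_method": "CHECK", "iban": ""},
]
for text, conf in mine_rules(records, "payment_method", "iban", min_confidence=0.75):
    print(f"{text} (confidence {conf:.0%})")
# If payment_method = 'SEPA', iban must be filled (confidence 75%)
```

The output is a candidate, not an active rule: just as the text describes, someone from the governance team still decides whether the pattern reflects a real business requirement or just a habit in the existing data.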

Remediation: Fix Issues Where You Find Them

Another useful functionality is the ability to remediate master data, which lets you correct data directly from the data quality analysis applications. As mentioned earlier, you can drill down from the dashboard to a specific analysis. From there, you can see which business objects are lowering the score and either resolve the problem yourself or delegate it to the person responsible.
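The drill-down and delegation step can be pictured as filtering the records that fail a rule and grouping them by owner. This is a conceptual sketch, not the MDG remediation apps; the `owner` attribute and the `failing_records` and `worklist_by_owner` functions are hypothetical.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]


def failing_records(records: List[Record], check: Callable[[Record], bool]) -> List[Record]:
    """Return the records that violate a rule, i.e. the ones pulling the score down."""
    return [rec for rec in records if not check(rec)]


def worklist_by_owner(failures: List[Record]) -> Dict[str, List[str]]:
    """Group failing record IDs by their responsible owner so they can be delegated."""
    worklist: Dict[str, List[str]] = {}
    for rec in failures:
        worklist.setdefault(rec.get("owner", "unassigned"), []).append(rec["id"])
    return worklist


def iban_rule(rec: Record) -> bool:
    # Same illustrative rule as before: SEPA records need an IBAN.
    return rec.get("payment_method") != "SEPA" or bool(rec.get("iban"))


records = [
    {"id": "BP-1", "payment_method": "SEPA", "iban": "DE89370400440532013000", "owner": "finance"},
    {"id": "BP-2", "payment_method": "SEPA", "iban": "", "owner": "finance"},
    {"id": "BP-3", "payment_method": "SEPA", "iban": "", "owner": "procurement"},
]
print(worklist_by_owner(failing_records(records, iban_rule)))
# {'finance': ['BP-2'], 'procurement': ['BP-3']}
```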

Custom Fields: Tailor DQM to Your Company's Needs

The last functionality covered here is Custom Fields. These fields can be used in DQM in the same way as standard fields. They allow a company to decide what data needs to be captured and used, giving a clearer overview of its master data processes. For example, you can add a custom field that tracks the source of each record and then use it to monitor the quality of each source. This shortens the time needed to discover and resolve errors.
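To make the source-tracking example concrete, here is a minimal sketch that computes a rule's pass rate per value of a custom field. The field name `source`, its values, and the `pass_rate_by_source` function are hypothetical; in MDG, the custom field would be added through the system's extensibility options and evaluated there.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Record = Dict[str, str]


def pass_rate_by_source(records: List[Record], check: Callable[[Record], bool]) -> Dict[str, float]:
    """Share of records passing a rule, broken down by the hypothetical 'source' custom field."""
    totals: Dict[str, int] = defaultdict(int)
    passed: Dict[str, int] = defaultdict(int)
    for rec in records:
        src = rec.get("source", "unknown")
        totals[src] += 1
        if check(rec):
            passed[src] += 1
    return {src: passed[src] / totals[src] for src in totals}


def iban_rule(rec: Record) -> bool:
    # Illustrative rule: SEPA records need an IBAN.
    return rec.get("payment_method") != "SEPA" or bool(rec.get("iban"))


records = [
    {"source": "manual", "payment_method": "SEPA", "iban": "DE89370400440532013000"},
    {"source": "manual", "payment_method": "SEPA", "iban": ""},
    {"source": "migration", "payment_method": "CHECK"},
    {"source": "migration", "payment_method": "SEPA", "iban": "NL91ABNA0417164300"},
]
print(pass_rate_by_source(records, iban_rule))
# {'manual': 0.5, 'migration': 1.0}
```

A breakdown like this points directly at where errors enter the system (here, manual entry), which is what makes fixing them at the source faster.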

Key Takeaways

Data Quality Management is most effective when you treat it as a practical toolbox rather than a one-time setup:

- Use validation rules to prevent bad input, derivations to make consistent values the default, and enrichment to complete or verify critical attributes.

- Turn those standards into something you can manage by running evaluations that produce measurable scores.

- Use analytics to drill down from KPIs to root causes, and apply remediation to close issues quickly with the right owners.

- Apply rule mining and custom fields to keep the approach scalable, so that your ruleset and reporting evolve with the business rather than lagging behind it.

If you have any questions, don’t hesitate to reach out. We will be happy to clarify and expand on anything covered here!
